_page-0001.jpg)

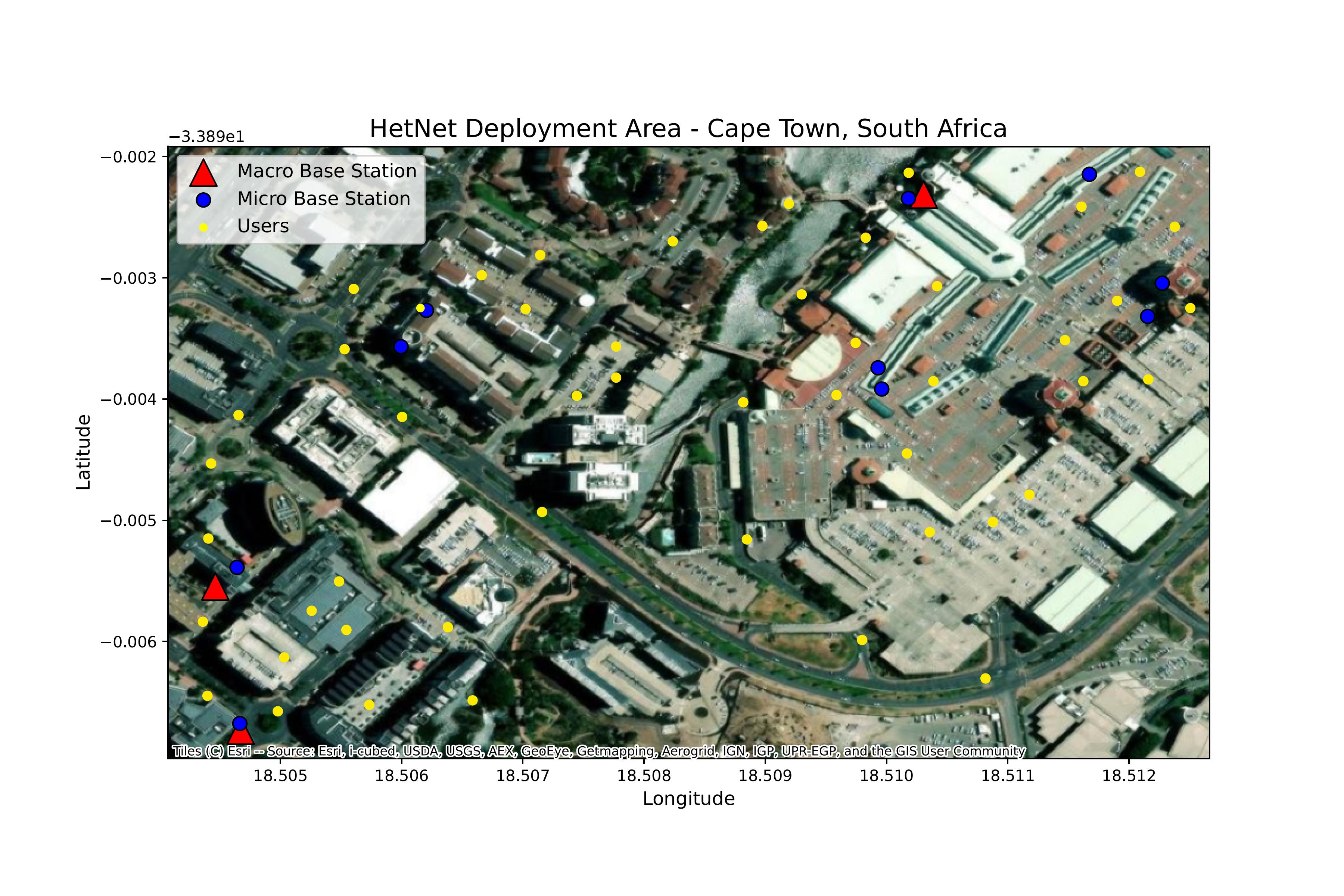

We consider a downlink HetNet operating within an O-RAN architecture.

The network consists of a set of BSs, \(B = \{1, \dots, N_B\}\), comprising \(N_M\)

macro BSs and \(N_S\) micro BSs. These serve a set of user equipments (UEs) \(U = \{1, \dots, N_U\}\)

distributed stochastically within the coverage area.

The system is controlled by a centralised Near-RT

RIC that hosts an xApp responsible for optimising radio resources at discrete

time intervals \(t\)

We formulate the problem as a MDP \((\mathcal{S}, \mathcal{A}, \mathcal{P}, \mathcal{R})\). The agent (xApp) interacts with the environment (E2 nodes) as follows:

\textbf{State Space \(\mathcal{S}\)}: The state \(s_t\) aggregates global network observables available at the RIC: \begin{equation} s_t = \left\{\mathbf{p}_{t-1}, \{\mathbf{I}_{u}^{\rm{est}}\}_{u \in U}, \mathbf{L}_{\rm{geo}}\right\}, \end{equation} where \(\mathbf{p}_{t - 1}\) is the previous power allocation, \(\mathbf{I}_{u}^{\rm{est}}\) is the estimated interference measurement from UE channel quality indicator (CQI) reports, and \(\mathbf{L}_{\rm{geo}}\) encapsulates the fixed topology geometry.

.png)

.png)

.png)

.png)

.png)